Sightengine is an AI-driven content moderation platform offering REST APIs that automatically analyze and filter user-generated images, videos, and text for unsafe or unwanted content. It is built for developers who need to integrate moderation into apps, social networks, marketplaces, and messaging platforms at scale.

Online communities and platforms struggle with harmful content like nudity, hate speech, violent imagery, scams, or spam. Manual moderation is slow, expensive, and error-prone. Sightengine automates this process with deep learning models, helping teams enforce safety rules faster and with fewer resources.

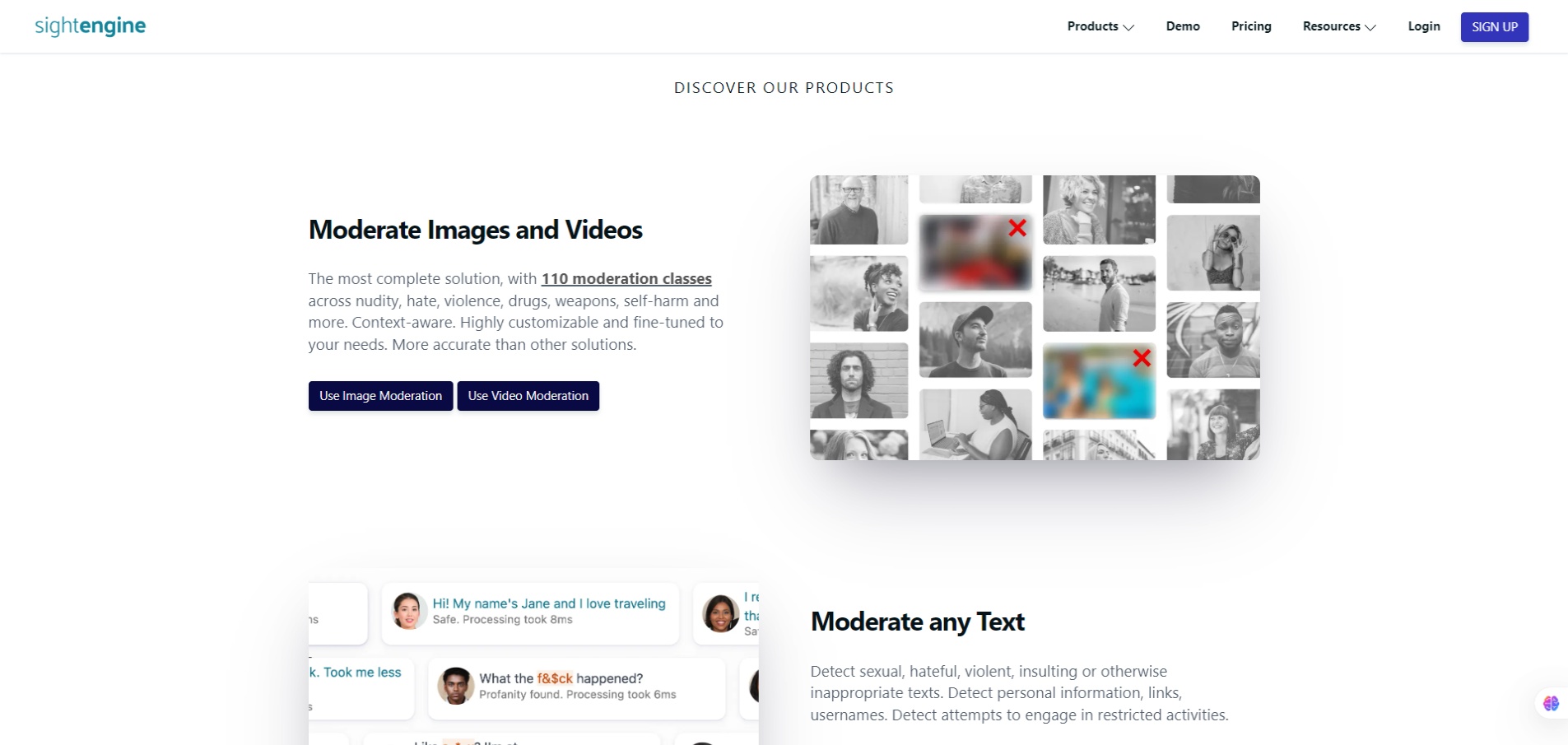

You send content (images, videos, or text) to the API, and the service returns classification labels or risk scores across dozens of categories such as nudity, weapons, alcohol, offensive gestures, and hate symbols. It supports image and video moderation (including live streams), text moderation, AI-generated image detection, and deepfake detection. Documentation and SDKs help developers integrate quickly.
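To make the idea of per-category risk scores concrete, here is a minimal sketch of how an application might turn such scores into a moderation decision. It makes no network calls. The flat `{category: score}` shape, the category keys, the threshold values, and the helper names `flag_categories` and `decide` are all assumptions for illustration, not Sightengine's actual response format or SDK; check the official documentation for the real field names.

```python
from typing import Mapping

# Hypothetical per-category thresholds (0.0 to 1.0); tune these to your own policy.
THRESHOLDS = {"nudity": 0.5, "weapon": 0.6, "alcohol": 0.8}


def flag_categories(
    scores: Mapping[str, float],
    thresholds: Mapping[str, float] = THRESHOLDS,
) -> list[str]:
    """Return, in sorted order, the categories whose score meets or exceeds its threshold."""
    return sorted(
        category
        for category, limit in thresholds.items()
        if scores.get(category, 0.0) >= limit  # a missing category counts as score 0.0
    )


def decide(scores: Mapping[str, float]) -> tuple[str, list[str]]:
    """Map risk scores to ("reject" | "accept", flagged categories)."""
    flagged = flag_categories(scores)
    return ("reject" if flagged else "accept"), flagged


# Illustrative scores only, not real API output.
example_scores = {"nudity": 0.02, "weapon": 0.91, "alcohol": 0.10}
decision, flagged = decide(example_scores)
print(decision, flagged)
```

Keeping the thresholds in one mapping lets a team tighten or relax individual categories without touching the decision logic. A real integration would likely add a third "send to human review" outcome for borderline scores.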