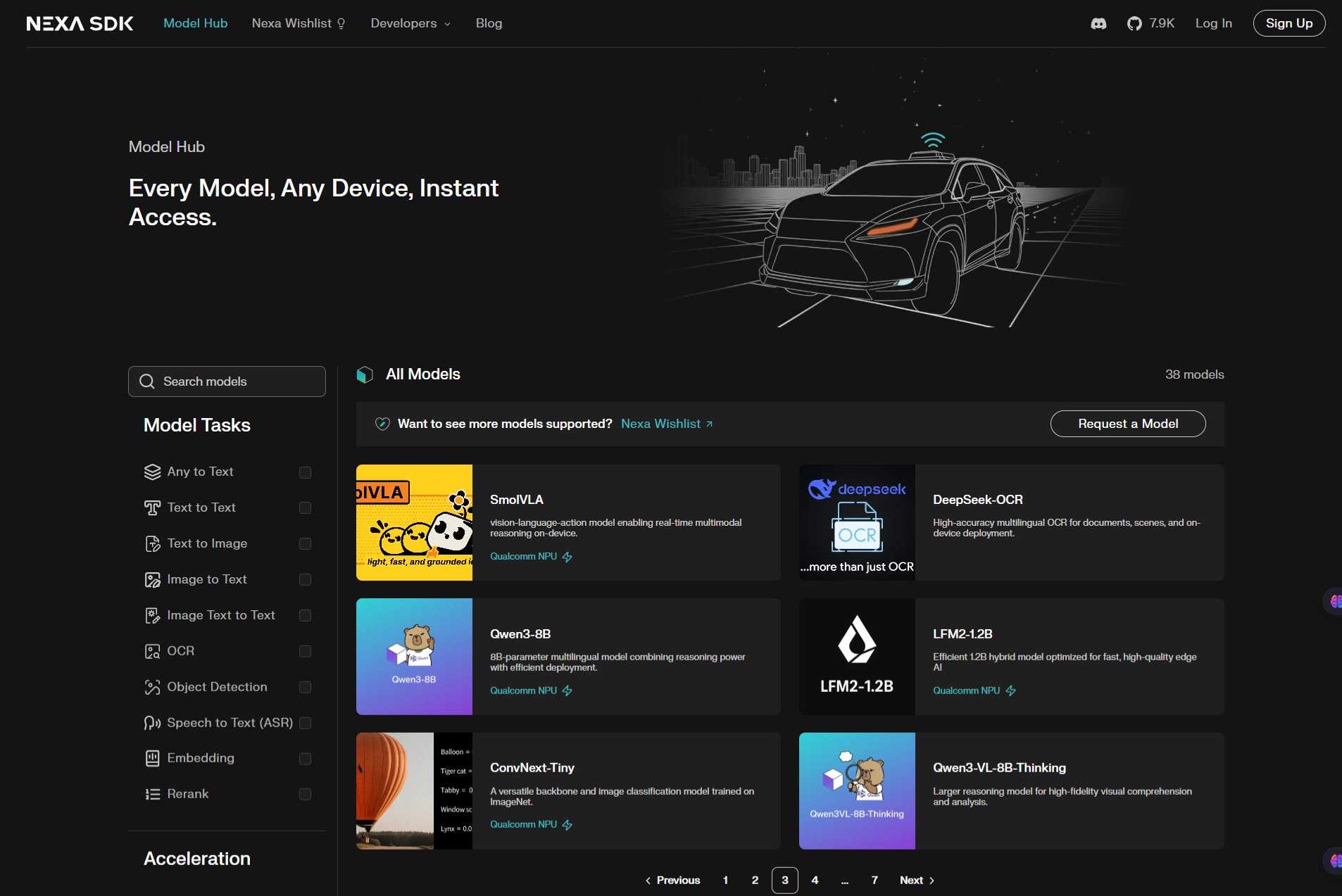

Nexa SDK is an edge AI development kit designed to run large language models (LLMs) and multimodal AI models directly on devices like laptops, mobile phones, and embedded systems. Instead of relying on cloud APIs, it focuses on local inference.

It’s aimed at developers who want more control over performance, cost, and privacy when building AI-powered apps.

Running AI in the cloud can be expensive, adds network latency, and often raises privacy concerns. Nexa SDK flips that by letting you run models locally.

This matters if you’re building:

- Privacy-first apps (health, finance, enterprise tools)

- Offline-capable products

- Real-time AI features (chat, vision, voice) with low latency

- Cost-sensitive products where per-request API costs can grow quickly with usage

If you don’t want to depend on OpenAI-style APIs for every request, running models locally with Nexa SDK is a strong alternative.